BRepCLIP

Contrastive Multimodal Pretraining on BRep Primitives for CAD Understanding

DFKI Kaiserslautern · RPTU Kaiserslautern-Landau · MindGarage

Overview

Native CAD structure as the substrate for multimodal understanding.

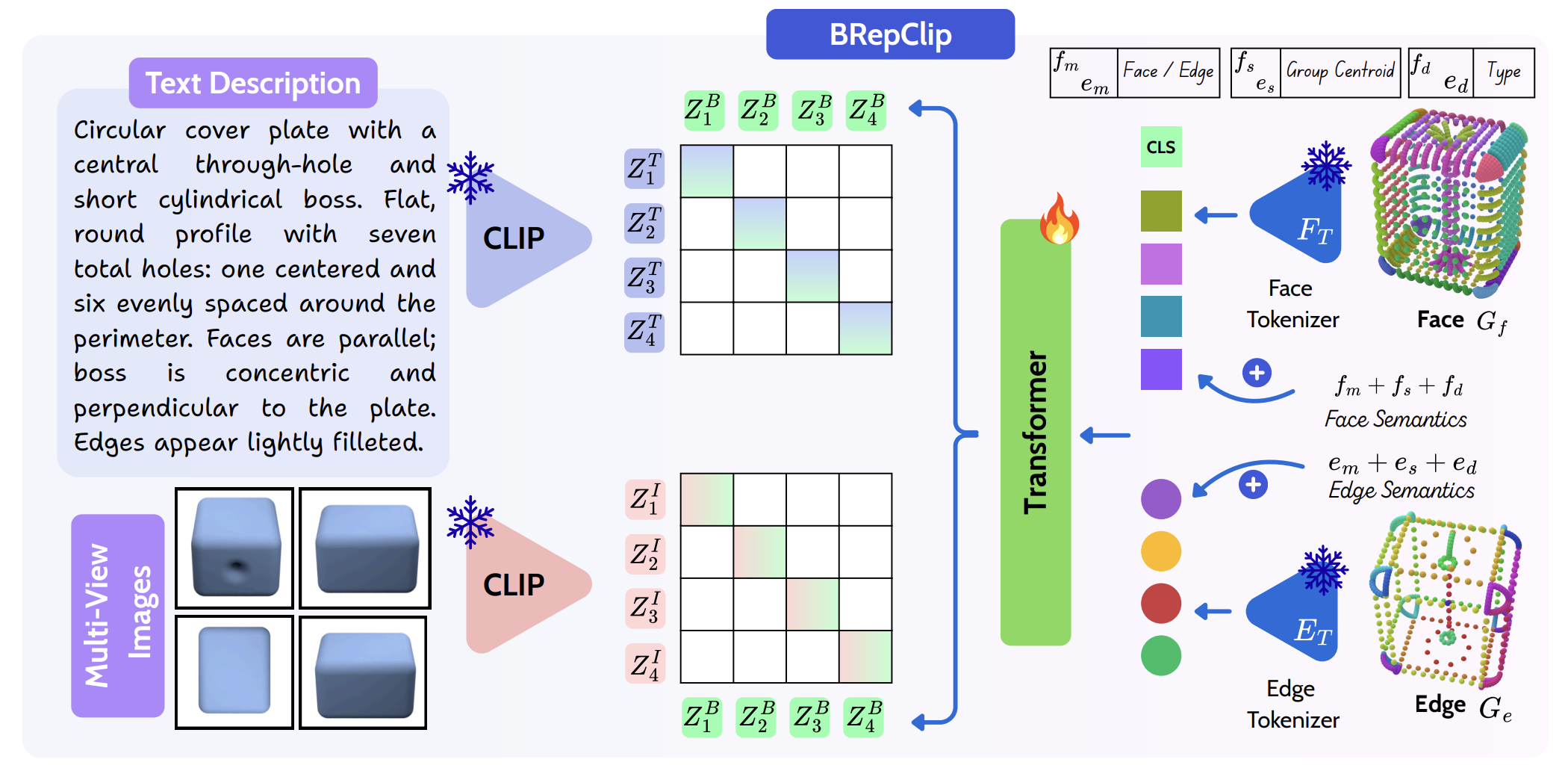

Point clouds discard analytic surface types, curve primitives, and topology. BRepCLIP keeps faces and edges as first-class CAD entities, tokenizes them with separate face and edge vocabularies, and trains a transformer encoder for open-vocabulary CAD retrieval and evaluation.

Core Contributions

What BRepCLIP brings

Represents CAD models through typed face and edge primitives rather than unordered points.

Uses separate codebooks for surface geometry and curve geometry.

Aligns BRep geometry with text descriptions and multiview image renderings.

Provides a structure-aware similarity metric for text- and image-conditioned CAD generation.

Pipeline

How BRepCLIP works

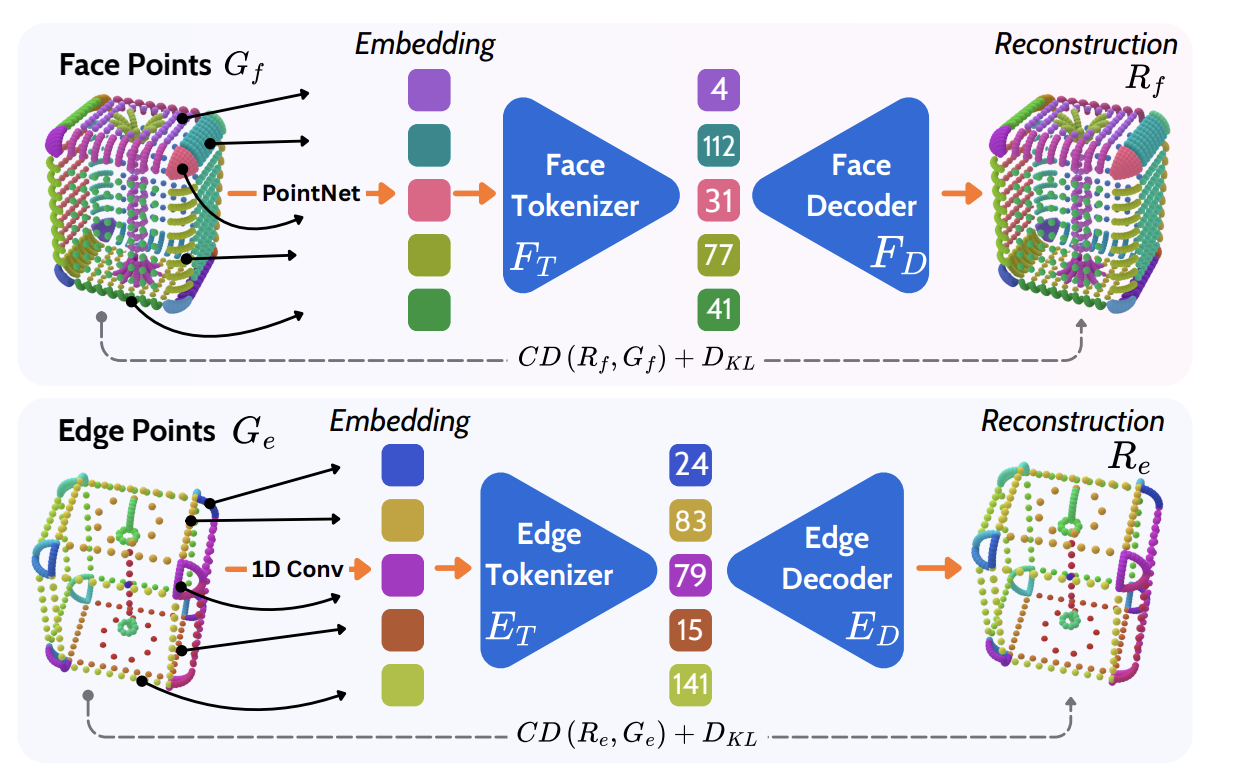

The pipeline is intentionally split into two stages: primitive tokenization first, followed by contrastive alignment with text and image encoders.

Hybrid face-edge tokenization
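
As a rough illustration of tokenizing with separate face and edge vocabularies, the sketch below assigns each primitive feature to its nearest codebook entry. This page does not describe how features are extracted or how the codebooks are learned, so the nearest-neighbour lookup, the toy 2-D features, the id offset for edge tokens, and the names `nearest_code` and `tokenize_brep` are all assumptions made for illustration.

```python
import math

def nearest_code(feature, codebook):
    """Return the index of the codebook entry closest to `feature` (Euclidean)."""
    return min(range(len(codebook)), key=lambda i: math.dist(feature, codebook[i]))

def tokenize_brep(face_feats, edge_feats, face_codebook, edge_codebook):
    """Map face and edge features to token ids drawn from two separate codebooks."""
    face_tokens = [nearest_code(f, face_codebook) for f in face_feats]
    # Assumption: edge ids are offset so they never collide with face ids
    # when both kinds of token are placed in one sequence.
    offset = len(face_codebook)
    edge_tokens = [offset + nearest_code(e, edge_codebook) for e in edge_feats]
    return face_tokens + edge_tokens

# Hypothetical toy codebooks and features.
face_codebook = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]
edge_codebook = [(0.0, 0.0), (1.0, 1.0)]
tokens = tokenize_brep(
    face_feats=[(0.9, 0.1), (0.1, 0.8)],
    edge_feats=[(0.8, 0.9)],
    face_codebook=face_codebook,
    edge_codebook=edge_codebook,
)
print(tokens)  # [1, 2, 4]
```

Keeping the two codebooks apart means a surface feature is never quantized to a curve code, which is the property the separate vocabularies are meant to preserve.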

Contrastive BRep-text-image alignment
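
The exact training objective is not given on this page. A common choice for this kind of alignment is a CLIP-style symmetric InfoNCE loss, sketched below without dependencies. The temperature value, the unweighted sum of a BRep-text term and a BRep-image term, and the function names are assumptions, not the authors' confirmed formulation.

```python
import math

def normalize(v):
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def cross_entropy_diag(logits):
    """Mean cross-entropy where the correct column for row i is column i."""
    total = 0.0
    for i, row in enumerate(logits):
        m = max(row)  # subtract the max for a numerically stable log-sum-exp
        log_sum_exp = m + math.log(sum(math.exp(x - m) for x in row))
        total += log_sum_exp - row[i]
    return total / len(logits)

def symmetric_info_nce(a, b, temperature=0.07):
    """CLIP-style loss between two batches of paired embeddings."""
    a = [normalize(v) for v in a]
    b = [normalize(v) for v in b]
    logits = [[sum(x * y for x, y in zip(u, v)) / temperature for v in b] for u in a]
    logits_t = [list(col) for col in zip(*logits)]
    return 0.5 * (cross_entropy_diag(logits) + cross_entropy_diag(logits_t))

def brep_alignment_loss(brep, text, image, temperature=0.07):
    """Assumed combination: BRep-text term plus BRep-image term."""
    return (symmetric_info_nce(brep, text, temperature)
            + symmetric_info_nce(brep, image, temperature))

# Hypothetical batch of two paired samples.
brep = [(1.0, 0.0), (0.0, 1.0)]
text = [(0.9, 0.1), (0.1, 0.9)]
image = [(1.0, 0.2), (0.2, 1.0)]
aligned = brep_alignment_loss(brep, text, image)
shuffled = brep_alignment_loss(brep, text[::-1], image[::-1])
print(aligned < shuffled)  # True
```

The loss is low when each BRep embedding is closest to its own text and image embeddings, and high when the pairing is broken, as the shuffled batch shows.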

Applications

One embedding space, three CAD workflows.

BRepCLIP is trained as a representation model, but its embedding can be used directly in practical CAD workflows: retrieval, classification, and generation evaluation.

Experiment

Quantitative results

BRepCLIP is evaluated on three tasks: text-to-CAD retrieval, zero-shot CAD classification, and CAD generation evaluation. Retrieval Chamfer Distance (CD) is scaled by 10³.

Text-to-CAD Retrieval

Given a text query, models retrieve the matching CAD object from a full gallery using cosine similarity in the shared embedding space.

| Method | ABC Top-1 | ABC Top-5 | ABC Top-20 | CADParser Top-1 | Automate Top-1 | CD ↓ |
|---|---|---|---|---|---|---|
| Point-BERT | 2.60 | 9.36 | 22.72 | 1.10 | 0.91 | 61.56 |
| PointNet | 3.31 | 12.07 | 29.60 | 0.40 | 3.33 | 62.27 |
| PointMLP | 0.90 | 3.50 | 9.50 | 1.10 | 1.02 | 68.43 |
| BRepEncoder | 4.30 | 16.30 | 33.90 | 2.10 | 4.82 | 61.11 |
| ULIP | 2.30 | 4.00 | 12.20 | 0.70 | 0.92 | 63.48 |
| OpenShape | 6.12 | 18.17 | 34.36 | 4.10 | 7.60 | 71.63 |
| BRepCLIP | 8.59 | 24.52 | 47.89 | 5.00 | 9.42 | 58.16 |
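
The retrieval step described above can be sketched as ranking gallery embeddings by cosine similarity to the query. The three-dimensional vectors below are made-up stand-ins for encoder outputs, and `retrieve_top_k` and `top_k_hit` are hypothetical helper names. The Top-k columns presumably report the percentage of queries for which `top_k_hit` is true.

```python
import math

def cosine(u, v):
    dot = sum(x * y for x, y in zip(u, v))
    norm_u = math.sqrt(sum(x * x for x in u))
    norm_v = math.sqrt(sum(y * y for y in v))
    return dot / (norm_u * norm_v)

def retrieve_top_k(query, gallery, k):
    """Return the k gallery ids most similar to the query, best first."""
    ranked = sorted(gallery, key=lambda cad_id: cosine(query, gallery[cad_id]), reverse=True)
    return ranked[:k]

def top_k_hit(query, gallery, target_id, k):
    """True if the matching CAD object is among the k best-ranked entries."""
    return target_id in retrieve_top_k(query, gallery, k)

# Hypothetical CAD embeddings keyed by object id.
gallery = {
    "bracket": (0.9, 0.1, 0.0),
    "flange": (0.1, 0.9, 0.2),
    "gear": (0.0, 0.2, 0.9),
}
query = (0.2, 0.8, 0.1)  # stand-in for the embedding of a text prompt
print(retrieve_top_k(query, gallery, k=2))  # ['flange', 'bracket']
```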

Zero-Shot Classification on FabWave

CAD embeddings are matched directly to class-level text descriptors without fine-tuning.

| Method | Top-1 | Top-5 | Top-10 |
|---|---|---|---|
| Point-BERT | 17.34 | 40.21 | 56.04 |
| PointNet | 15.74 | 38.78 | 54.37 |
| PointMLP | 18.80 | 41.00 | 59.02 |
| BRepEncoder | 21.81 | 43.40 | 60.74 |
| ULIP | 21.65 | 47.28 | 60.62 |
| MixCon3D | 34.10 | 63.93 | 78.18 |
| OpenShape | 33.58 | 68.73 | 81.73 |
| BRepCLIP | 38.62 | 70.28 | 86.71 |
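
A minimal sketch of how a zero-shot Top-k accuracy could be computed: each CAD embedding is compared with the embedding of every class descriptor, and a sample counts as correct if its label is among the k best-scoring classes. The class names, vectors, and helper names are hypothetical, and the page does not specify the exact evaluation protocol.

```python
import math

def unit(v):
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v]

def rank_classes(cad_embedding, class_embeddings):
    """Class names sorted by cosine similarity to the CAD embedding, best first."""
    cad = unit(cad_embedding)
    scores = {
        name: sum(a * b for a, b in zip(cad, unit(vec)))
        for name, vec in class_embeddings.items()
    }
    return sorted(scores, key=scores.get, reverse=True)

def top_k_accuracy(samples, class_embeddings, k):
    """Percentage of (embedding, label) samples whose label is in the top-k classes."""
    hits = sum(label in rank_classes(emb, class_embeddings)[:k] for emb, label in samples)
    return 100.0 * hits / len(samples)

# Hypothetical text embeddings of class descriptors and labelled CAD embeddings.
class_embeddings = {"bolt": (1.0, 0.0), "nut": (0.0, 1.0), "washer": (1.0, 1.0)}
samples = [((0.9, 0.1), "bolt"), ((0.2, 0.9), "nut"), ((0.9, 0.3), "washer")]
print(round(top_k_accuracy(samples, class_embeddings, k=1), 2))  # 66.67
print(top_k_accuracy(samples, class_embeddings, k=2))            # 100.0
```

No classifier head is trained here; adding a class only requires embedding one more text descriptor.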

Evaluation

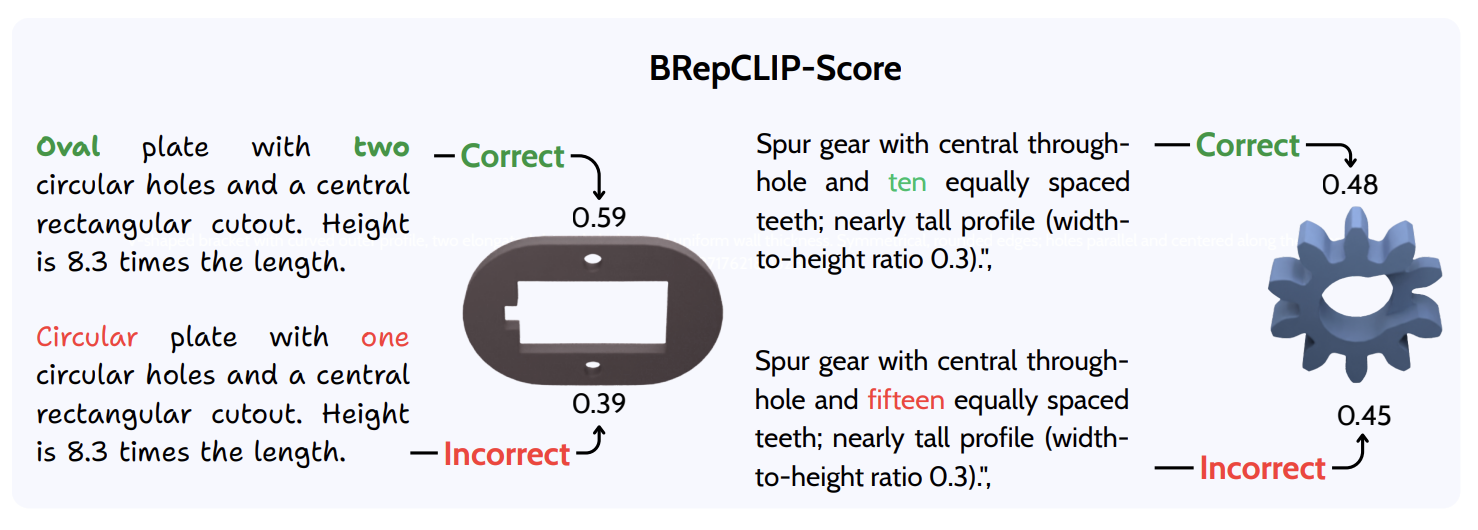

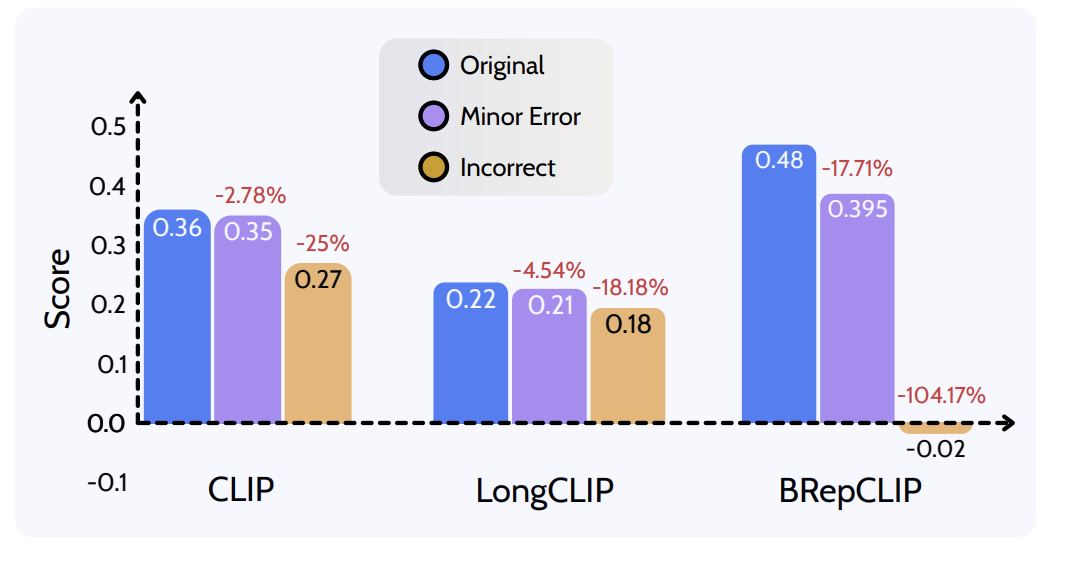

BRepCLIP-Score

BRepCLIP-Score evaluates whether generated CAD geometry matches a text prompt using the learned BRep embedding, making the metric sensitive to topology, hole counts, surface types, and edge structure.

Geometry distance

Chamfer Distance is computed between point samples from the generated CAD model and the target CAD geometry. Lower values indicate closer global shape reconstruction.
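
For reference, one common form of the metric is sketched below: the mean squared nearest-neighbour distance in each direction, summed. Published variants differ (squared or unsquared distances, sum or mean), and this page does not say which is used, so treat the choice here as an assumption.

```python
import math

def chamfer_distance(points_a, points_b):
    """Symmetric Chamfer Distance with mean squared nearest-neighbour distances."""
    def one_way(src, dst):
        return sum(min(math.dist(p, q) ** 2 for q in dst) for p in src) / len(src)
    return one_way(points_a, points_b) + one_way(points_b, points_a)

# Hypothetical point samples; only the first point differs, by 0.1 along z.
generated = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
target = [(0.0, 0.0, 0.1), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
cd = chamfer_distance(generated, target)
print(round(cd * 1e3, 3))  # 6.667, multiplied by 1000 here only for readability
```

The brute-force search is quadratic in the number of points; a spatial index such as a k-d tree would be the usual replacement for real sample sizes.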

Image-text similarity

We render multiview images of each generated CAD model and compare them with the prompt using CLIP image and text embeddings. This captures visual alignment, but not native BRep structure.

Prompt-to-BRep similarity

The prompt embedding is compared directly with the generated CAD model's BRep embedding using cosine similarity, so errors in holes, surfaces, edges, and topology affect the score.

Semantic faithfulness

Five CAD designers and GPT-based evaluators score multiview renderings from 0 to 10 based on how faithfully each generated CAD model matches the input caption.

Text-to-CAD Generation Benchmark

BRepCLIP-Score is compared against CD, CLIP score, human ratings, and GPT ratings on generated CAD outputs from recent text-to-CAD methods.

| Method | CD ↓ | CLIP Score ↑ | Human Score ↑ | GPT Score ↑ | BRepCLIP Score ↑ |
|---|---|---|---|---|---|
| Ground Truth | - | 0.37 | 9.7 | 9.8 | 0.61 |
| DeepCAD | 86.54 | 0.24 | 2.2 | 2.4 | 0.15 |
| Text2CAD | 86.54 | 0.26 | 3.6 | 3.5 | 0.16 |
| CADRille | 155.80 | 0.26 | 3.5 | 3.7 | 0.16 |
| Text2CQ (Q3B) | 68.15 | 0.33 | 5.0 | 4.9 | 0.31 |
| Text2CQ (GL) | 71.27 | 0.32 | 4.6 | 4.5 | 0.25 |
| Text2CQ (CG) | 77.91 | 0.31 | 4.1 | 3.9 | 0.22 |
| CADFusion | 56.36 | 0.29 | 5.5 | 5.8 | 0.35 |
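
One way to read this table is to ask how well each automatic metric ranks the methods in the same order as the human raters. The sketch below computes a Spearman rank correlation from the seven generated-method rows (Ground Truth is left out because it has no CD entry). This analysis is an illustration added here, not a result reported on this page, and seven data points are far too few for firm conclusions.

```python
def average_ranks(values):
    """1-based ascending ranks; tied values share their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for idx in order[i:j + 1]:
            ranks[idx] = mean_rank
        i = j + 1
    return ranks

def spearman(x, y):
    """Spearman correlation: Pearson correlation of the average ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / (var_x * var_y) ** 0.5

# Columns copied from the table, in row order from DeepCAD to CADFusion.
human = [2.2, 3.6, 3.5, 5.0, 4.6, 4.1, 5.5]
cd = [86.54, 86.54, 155.80, 68.15, 71.27, 77.91, 56.36]
clip = [0.24, 0.26, 0.26, 0.33, 0.32, 0.31, 0.29]
brepclip = [0.15, 0.16, 0.16, 0.31, 0.25, 0.22, 0.35]
for name, scores in [("CD", cd), ("CLIP", clip), ("BRepCLIP", brepclip)]:
    print(name, round(spearman(scores, human), 3))
```

On these rows the correlation with the human scores comes out at roughly -0.94 for CD (negative because lower CD is better), 0.77 for CLIP Score, and 0.99 for BRepCLIP Score.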

Collaborators

Contact and profiles

Author profile links point to the public project and profile pages used in related CAD generation work. Email addresses are listed where publicly available.

Muhammad Usama

DFKI · RPTU Kaiserslautern-Landau · MindGarage

Didier Stricker

Scientific Director, DFKI Augmented Vision

Mohammad Sadil Khan*

Equal contributing supervisor · DFKI / RPTU

Muhammad Zeshan Afzal*

Equal contributing supervisor · DFKI / MindGarage